n_miss += 1 # Appending number of misclassified examples # at every iteration. if (np.squeeze(y_hat) - y) != 0: theta += lr*((y - y_hat)*x_i) # Incrementing by 1.

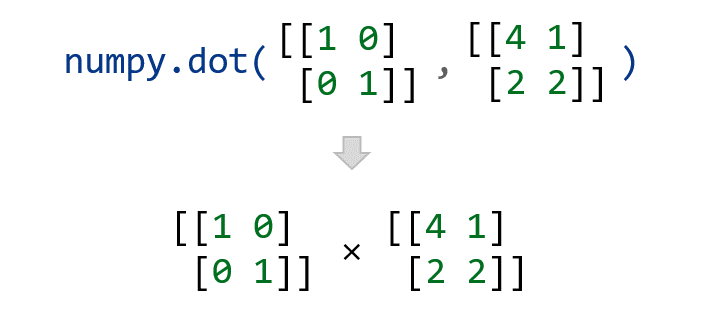

y_hat = step_func(np.dot(x_i.T, theta)) # Updating if the example is misclassified. x_i = np.insert(x_i, 0, 1).reshape(-1,1) # Calculating prediction/hypothesis. If these two input lists are not of equal length. for idx, x_i in enumerate(X): # Insering 1 for bias, X0 = 1. I need to write the function dot( L, K ) that should output the dot product of the lists L and K. for epoch in range(epochs): # variable to store #misclassified. theta = np.zeros((n+1,1)) # Empty list to store how many examples were # misclassified at every iteration. # m-> number of training examples # n-> number of features m, n = X.shape # Initializing parapeters(theta) to zeros. So we multiply the length of a times the length of b, then multiply by the cosine. b a × b × cos () Where: a is the magnitude (length) of vector a.def perceptron(X, y, lr, epochs): # X -> Inputs. We can calculate the Dot Product of two vectors this way: a Note that even though the Perceptron algorithm may look similar to logistic regression, it is actually a very different type of algorithm, since it is difficult to endow the perceptron’s predictions with meaningful probabilistic interpretations, or derive the perceptron as a maximum likelihood estimation algorithm.
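One way the `dot(L, K)` function described above might look is sketched below, assuming plain Python lists of numbers. The text does not say what should happen when the lists differ in length, so raising a `ValueError` is an assumption made for this sketch. `magnitude` is a hypothetical helper, added only to compare the element-wise sum with the length-times-length-times-cosine formula.

```python
import math


def dot(L, K):
    """Return the dot product of two equal-length lists of numbers."""
    if len(L) != len(K):
        # Assumption: unequal lengths are treated as an error in this sketch.
        raise ValueError("dot() needs two lists of equal length")
    # Multiply matching elements and add up the products.
    return sum(l * k for l, k in zip(L, K))


def magnitude(v):
    """Hypothetical helper: Euclidean length of a vector."""
    return math.sqrt(dot(v, v))


a = [3, 4]
b = [4, 3]

# Algebraic form: sum of element-wise products.
algebraic = dot(a, b)

# Geometric form: |a| * |b| * cos(angle between a and b).
angle = math.atan2(b[1], b[0]) - math.atan2(a[1], a[0])
geometric = magnitude(a) * magnitude(b) * math.cos(angle)

print(algebraic, geometric)
print(math.isclose(algebraic, geometric))
```

Both forms should agree up to floating-point rounding, which is why the comparison uses `math.isclose` rather than `==`.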
